Noise [part 1]

02 July 2021

Article by James Zjalic (Verden Forensics) and Henk-Jan Lamfers (Foclar)

Problem

Noise is an inherent part of digital imagery, and of signal processing in general. It is commonly defined as the undesired component of a signal. In the majority of cases its random nature means that, in isolation, it does not affect the desired region of an image enough to cause misinterpretation. However, if it is not removed and other enhancement processes are applied, the visibility of the noise is likely to increase [1], which may then cause problems with interpretation. Even if no other enhancement is to be applied and there is no risk of misinterpretation, noise still reduces the overall quality of digital imagery, so any steps that reduce or remove it are often desirable.

Cause

Noise is most commonly introduced during the acquisition stage, although it can also be added during any subsequent transmission stage through channel interference. The light signal entering a camera lens must undergo a series of processes before it is encoded as a digital image or video, and each process adds a degree of noise to the original signal, culminating in the noise visible in the final imagery. These processes include the conversion of light to electrons and the amplification of the electronic signal. The degree of noise depends on a number of factors, including the light levels and the efficiency of the internal electronics of the capture system. This is easily seen in low-light captures, which generally contain more noise than those captured in brightly illuminated environments because more signal amplification is required.

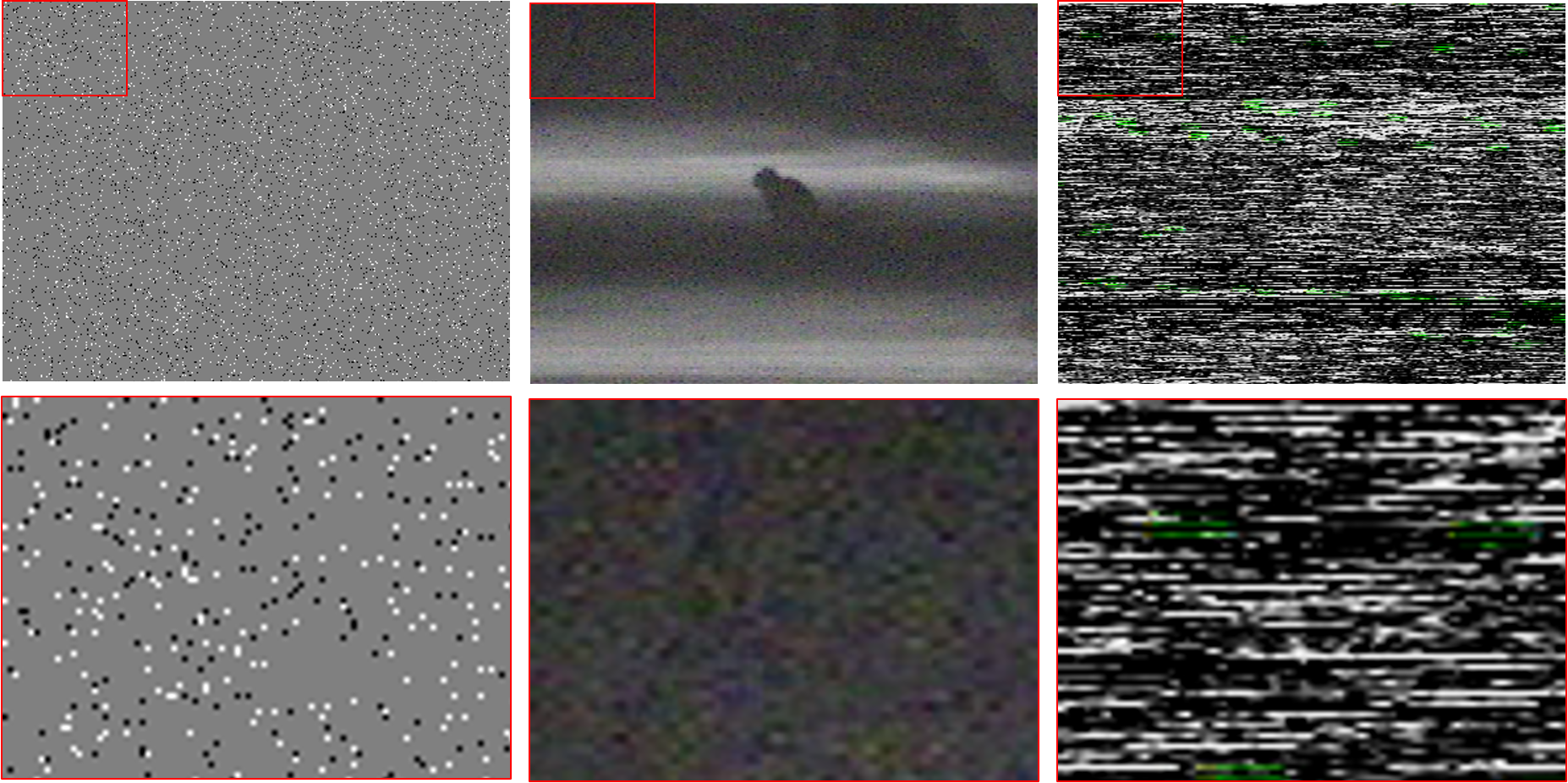

The most commonly encountered types of noise (see Figure 1) are impulse noise (also known as salt and pepper noise due to its speckled black and white appearance), pattern noise and line noise. Impulse noise is characterised by random black and white pixels throughout an image, caused by dead pixels in the imaging sensor or the addition of electronic noise prior to encoding. Pattern noise is visible as coloured pixels generated by variation in the three colour sensors or by the analogue to digital conversion process. When there is a composite analogue connection between a camera and a recorder, only a single channel is available to transmit the data. The colour information is therefore encoded in a high frequency carrier wave, and the signal is digitised once it reaches the recorder. Because the high frequency luminance and colour information overlap, the frame grabber misinterprets some high frequencies as colour, which results in colour distortion. This can also occur in black and white captures. Line noise is visible as vertical or horizontal light or dark lines and is generally caused by synchronisation issues in the sensor [2].

Solution

There are various processes used to remove noise. However, it must first be considered whether the desired signal exists within a video or a still image, because although the approaches to each are similar, the specifics can differ.

When noise exists within a video, the possibility exists that a process can be applied to a number of frames in series to increase the signal to noise ratio. For example, if the desired region is stationary (such as the number plate of a parked vehicle), the frames can be averaged, thus reducing the impact of random variations (or noise) between frames. This method is of no use if the desired region is moving as the natural variation between frames would be too great, in which case the exhibit would need to be treated as a still image, unless stabilisation can limit the degree of spatial difference between sequential frames.
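The frame averaging idea described above can be sketched in a few lines of Python with NumPy. This is a minimal illustration rather than a forensic workflow: the scene, the noise level, the frame count and the function name `average_frames` are all assumptions made for the example, and the frames are assumed to be already aligned.

```python
import numpy as np

def average_frames(frames):
    """Average equally sized greyscale frames pixel by pixel (hypothetical helper)."""
    stack = np.stack([np.asarray(f, dtype=np.float64) for f in frames])
    return stack.mean(axis=0)

# Synthetic stand-in for a stationary region: a grey patch with a brighter bar.
rng = np.random.default_rng(0)
scene = np.full((32, 32), 100.0)
scene[12:20, 8:24] = 180.0

# 25 noisy "frames" of the same scene (assumed zero-mean Gaussian noise).
frames = [scene + rng.normal(0.0, 20.0, scene.shape) for _ in range(25)]

averaged = average_frames(frames)

# Compare the remaining noise in one frame with that in the averaged result.
noise_single = np.std(frames[0] - scene)
noise_averaged = np.std(averaged - scene)
print(f"single frame: {noise_single:.1f}, averaged: {noise_averaged:.1f}")
```

For noise that is independent between frames, averaging N frames is expected to reduce its standard deviation by roughly a factor of the square root of N, while the stationary content is left unchanged.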

The approach for still images is based on a similar theory, but the spatial representation of the image is considered and averaging is applied within neighbourhoods of pixels rather than between frames. This is based on the premise that neighbouring pixels representing the desired region are expected to be correlated with one another. In contrast, random noise within that region is uncorrelated with the pixel values and is therefore reduced by the averaging. As the method assumes that neighbouring pixels are similar, it breaks down at object edges (or within high-frequency regions), where it reduces sharpness [3].
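Both effects can be seen on a single row of pixels. The following is a small sketch with made-up pixel values (the helper name `mean_of_neighbours` is an assumption for the example): averaging each pixel with its two neighbours flattens small random variations, but also spreads a sharp edge over several pixels.

```python
import numpy as np

def mean_of_neighbours(row, width=3):
    """Replace each pixel with the mean of itself and its neighbours (hypothetical helper).

    Only positions where the full window fits are returned.
    """
    kernel = np.ones(width) / width
    return np.convolve(row, kernel, mode="valid")

# A flat region of nominal value 50 with small random-looking deviations.
noisy_flat = np.array([50, 56, 44, 50, 53, 47], dtype=float)
smoothed_flat = mean_of_neighbours(noisy_flat)

# A clean, sharp edge between a dark and a bright region.
edge = np.array([0, 0, 0, 0, 90, 90, 90, 90], dtype=float)
smoothed_edge = mean_of_neighbours(edge)

print(smoothed_flat)  # values close to 50, 50, 49, 50
print(smoothed_edge)  # values close to 0, 0, 30, 60, 90, 90
```

The flat region moves closer to its nominal value, whereas the edge, which originally jumped from 0 to 90 in one step, now ramps through intermediate values.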

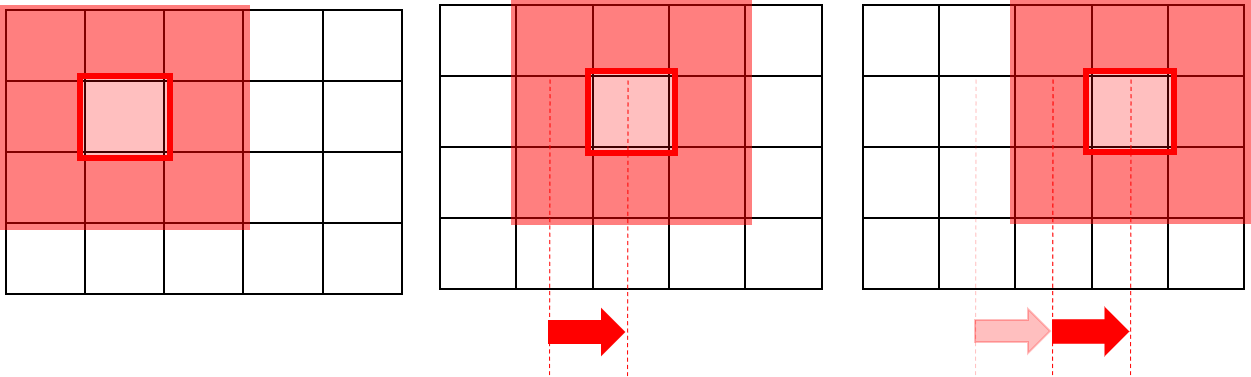

If neighbourhoods of 3 x 3 pixels (Figure 2) are used for the procedure (square blocks 3 pixels wide by 3 pixels high), it is the pixel at the centre of this matrix (or kernel) for which a new value is generated. The square then moves one pixel across and the process is repeated. It continues in this manner (moving down to a new row once the end of the current row is reached) until a new image has been created from the new pixel values. Kernels are generally composed of odd numbers of pixels so that they are centred on a single pixel (e.g. 3x3, 5x5, 7x7). The larger the kernel, the more processing power is required and the more noise reduction (and loss of feature detail) occurs, because each new value is averaged over a wider area. These noise reduction processes are a type of low-pass filter, as they allow low-frequency information to pass whilst reducing high-frequency information [4].
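The sliding kernel procedure can be written out explicitly as follows. This is a sketch under stated assumptions, not an optimised or definitive implementation: the function name `mean_filter` is hypothetical, a plain mean is used for the new value, and the image borders are handled by repeating the edge pixels, which is one common choice that the description above does not specify.

```python
import numpy as np

def mean_filter(image, size=3):
    """Slide a size x size kernel over a greyscale image and average each window."""
    if size % 2 == 0:
        raise ValueError("kernel size must be odd so it has a centre pixel")
    image = np.asarray(image, dtype=np.float64)
    pad = size // 2
    # Assumption: repeat the border pixels so the kernel fits at the image edges.
    padded = np.pad(image, pad, mode="edge")
    output = np.empty_like(image)
    for row in range(image.shape[0]):        # move down one row at a time
        for col in range(image.shape[1]):    # move across one pixel at a time
            window = padded[row:row + size, col:col + size]
            output[row, col] = window.mean()  # new value for the centre pixel
    return output

# A uniform 5 x 5 patch with a single bright impulse in the middle.
image = np.full((5, 5), 10.0)
image[2, 2] = 100.0

filtered = mean_filter(image, size=3)
print(filtered[2, 2])  # (8 * 10 + 100) / 9, approximately 20
```

The impulse is reduced from 100 to about 20, but its energy is spread into the eight surrounding pixels, which is the same mechanism that softens genuine detail. Passing a larger `size` averages over a wider area and visits more pixels per output value.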

Conclusion

Noise reduction can improve the overall quality of imagery and optimise further processes, but one should always balance the need for a reduction in noise against the associated loss of sharpness at object edges (and therefore of feature detail). That said, some approaches are better than others at maintaining detail, and the next blog in this series will delve deeper into the specific solutions and implementations available for noise reduction in digital imagery.

References

[1] S. Ledesma, “A proposed framework for image enhancement,” University of Colorado, Denver, 2015.

[2] J. C. Russ, Forensic Uses of Digital Imaging. CRC Press, 2001.

[3] R. C. Gonzalez and R. E. Woods, Digital Image Processing, 3rd Edition. Pearson, 2007.

[4] M. Nixon and A. Aguado, Feature Extraction & Image Processing. Newnes, 2002.