Introduction to Imagery Authenticity

10 March 2021

Article by James Zjalic (Verden Forensics) and Foclar

Importance of Authentication

The justice system is built on the principle of fairness, as reflected in the term ‘justice’ itself: the quality of being just, impartial or fair [1]. As decisions within the system are based on evidence, it is essential that all exhibits are accurate representations of what they purport to be, so much so that charges can be brought against any party that attempts to manipulate evidence, regardless of whether it is given verbally or submitted for analysis.

We live in a time when the world is dominated by digital imagery, and its advantages to the justice system are clear. It can provide compelling visual evidence of events, something which was not possible just fifty years ago. It is also not subject to the fragility of the human cognitive system, which can bias an account or even omit important elements of it [2]. Despite these inherent advantages, in the age of deep fakes and photoshopping the potential for manipulation must always be considered before any work is performed, for if a video is later found to have been manipulated, all work on it and all inferences drawn from it must be considered unreliable and therefore inadmissible. Although the effects may not be as pronounced, an initial triage of the evidence in the form of a more basic authentication examination can also ensure that the most original exhibits are used for enhancement or analysis, thus eliminating the potential for quality degradation. At the most fundamental level, this could involve analysis to determine which software was used to encode the exhibit, for if it is found to be a screen capture or a conversion, it can be accepted that it is not the most original version. Whether the most original version can be made available is another matter, but it should be an expert's duty to act as a gatekeeper, doing what they can to ensure the most original imagery available is used.

Defining Authenticity

Authentication is the process of substantiating that the asserted provenance of data is true [3], and as such, an authentication examination seeks to determine whether a recording is consistent with the manner in which it is alleged to have been produced [4]. Only by establishing that a recording is original can it be conclusively accepted that it has not been edited, because originality rules out deliberate tampering [5]. An artefact that is consistent with an edit may have an innocent explanation, but a lack of evidence of editing cannot be considered proof that editing has not taken place unless the recording has been established as an original. As the term ‘original’ is relative to the source, the source under consideration must first be defined based on the information provided. As a very basic example, if a recording is purported to have been captured using a CCTV system, but its content shows a monitor displaying the CCTV footage as filmed by a mobile device, it can be accepted that it is inconsistent with an original recording. The potential, therefore, exists that the recording displayed on the screen has been manipulated using video editing software and is therefore providing a false account of the events that occurred.

Now imagine the same scenario, but the recording content is consistent with capture from a CCTV system. Further analysis is therefore required, which could take the form of a review of the metadata to determine whether details of the software used to encode the recording are present. Checks such as these can be performed using freeware in under 30 seconds, but they are extremely basic and are unlikely to reveal any traces of editing if the would-be manipulator has some knowledge of digital imagery. It is, therefore, essential that further techniques are utilised to ensure edited recordings do not slip through the net.
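As a rough illustration of how basic such a metadata check is, the following Python sketch searches the raw bytes of a file for the names of common encoding or editing software. The signature list and the function names are assumptions made for this example only; they are not drawn from any particular forensic tool, and a real examination would rely on a much larger, validated reference set and on proper metadata parsing.

```python
from pathlib import Path

# Illustrative signatures only (an assumption for this sketch). These are
# strings that the named software commonly writes into file metadata.
ENCODER_SIGNATURES = {
    b"Lavf": "FFmpeg/libavformat (possible conversion)",
    b"HandBrake": "HandBrake (transcoder)",
    b"Adobe Photoshop": "Adobe Photoshop (image editor)",
    b"Adobe Premiere": "Adobe Premiere (video editor)",
}


def find_encoder_traces(data: bytes) -> list[str]:
    """Return a description for every known signature present in the bytes."""
    return [label for sig, label in ENCODER_SIGNATURES.items() if sig in data]


def scan_file(path: str) -> list[str]:
    """Read a file in full and scan it for encoder signatures.

    Reading the whole file into memory keeps the sketch short; large video
    files would be better scanned in chunks.
    """
    return find_encoder_traces(Path(path).read_bytes())
```

A hit suggests the file has passed through the named software and is unlikely to be the most original version. An empty result proves nothing, since such strings are trivial to strip or overwrite.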

Manipulation detection tool

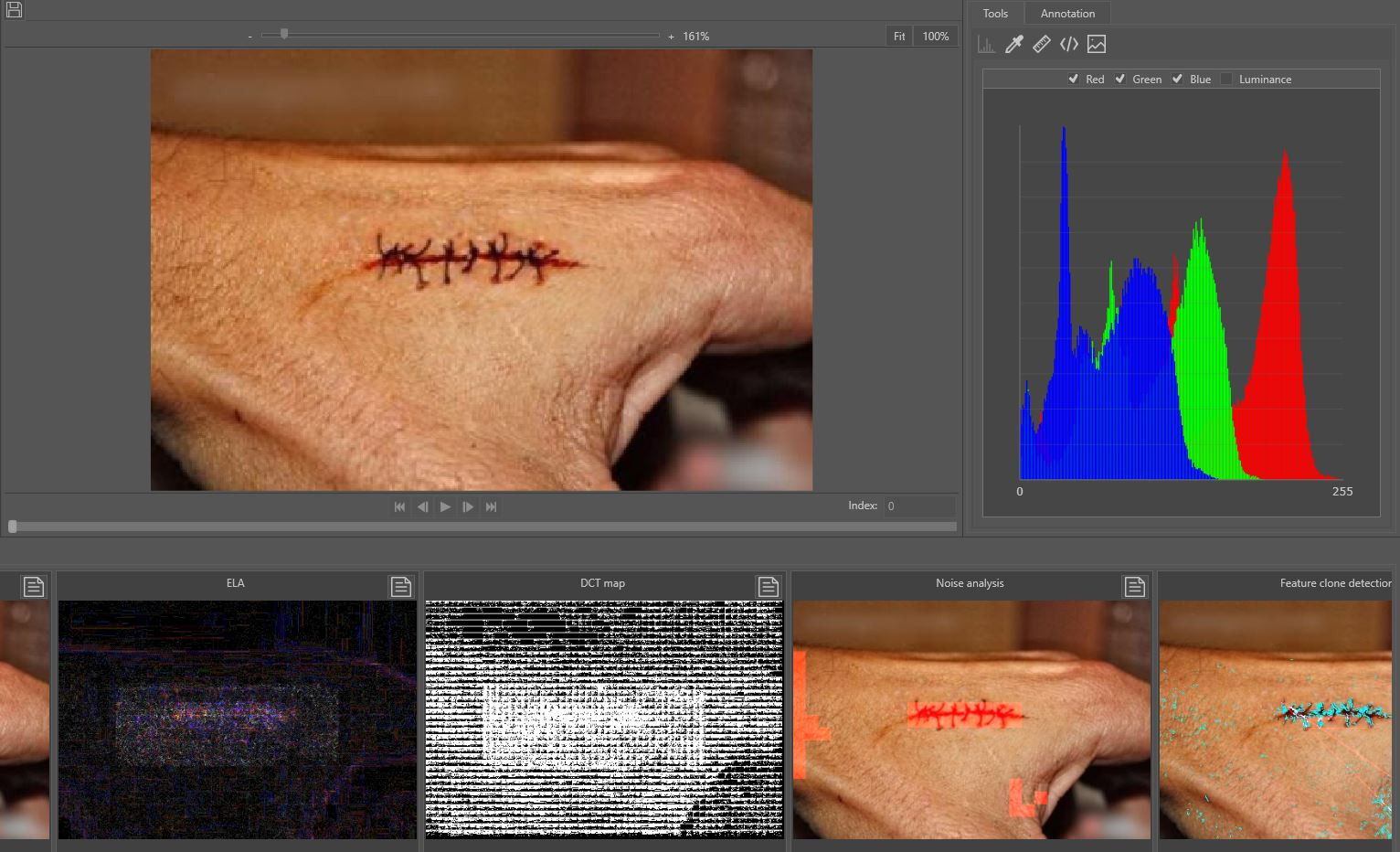

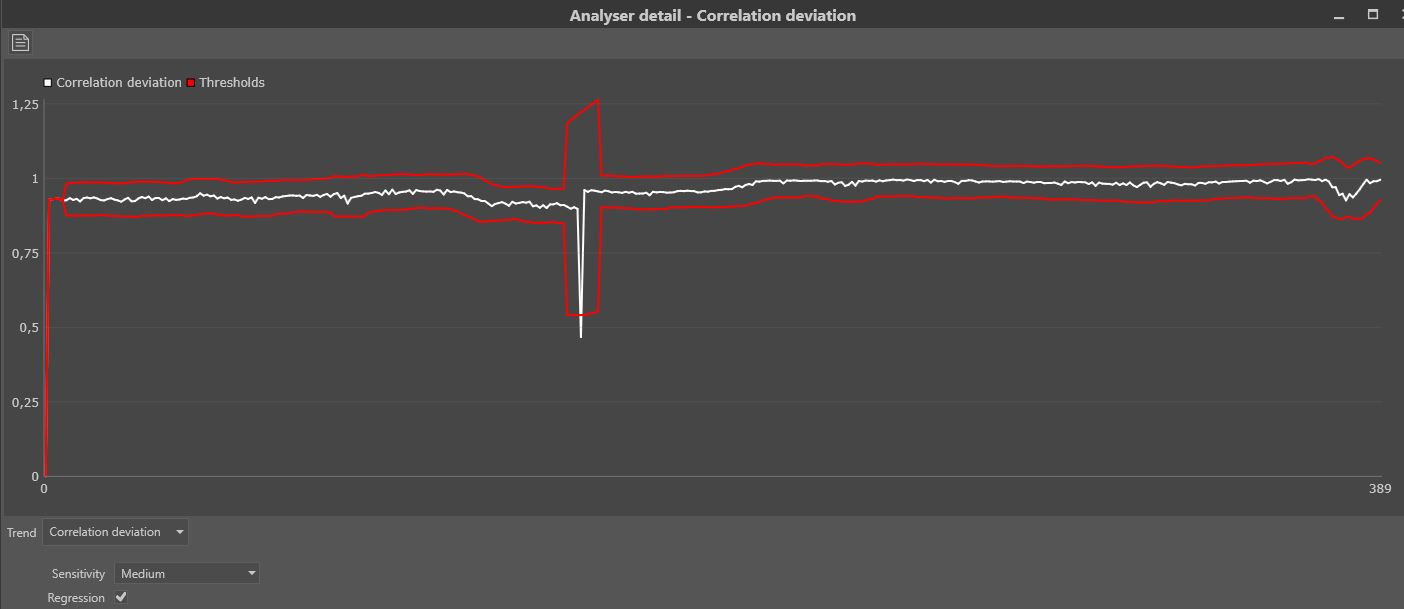

Mandet is a software tool for manipulation detection in images and video that provides a broad range of tools. One feature is image content filtering, which searches for statistical deviations within an image or video frame (Figures 1 and 2). Another is video trend analysis, which searches for statistical deviations in a specific signal extracted across the whole video. The DeepFake detector is one of its unique features. In addition to the content filters and analysers, it includes a hexadecimal viewer in which a file can be investigated at byte level. It also offers metadata extraction, camera ballistics, and some basic tooling.
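To give a sense of what investigating a file at byte level looks like, here is a minimal Python sketch of a hexadecimal dump. It is a generic illustration, not Mandet's viewer, and the function name `hex_dump` is hypothetical.

```python
def hex_dump(data: bytes, width: int = 16) -> str:
    """Format bytes as offset, hexadecimal values and printable characters."""
    pad = width * 3 - 1  # width of a full row of "xx xx xx ..." text
    lines = []
    for offset in range(0, len(data), width):
        row = data[offset:offset + width]
        hex_part = " ".join(f"{byte:02x}" for byte in row)
        # Non-printable bytes are shown as dots, as in most hex viewers.
        text_part = "".join(chr(b) if 32 <= b < 127 else "." for b in row)
        lines.append(f"{offset:08x}  {hex_part:<{pad}}  {text_part}")
    return "\n".join(lines)


# The first bytes of a JPEG file with a JFIF header.
print(hex_dump(b"\xff\xd8\xff\xe0JFIF", width=8))
# 00000000  ff d8 ff e0 4a 46 49 46  ....JFIF
```

Viewed this way, file signatures, container structure and embedded text such as software names can be inspected directly rather than through a parser's interpretation of them.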

Searching for manipulations within imagery is time-consuming, so the software has been developed to work as efficiently as possible. Determining the authenticity of an image or video file is important, but given the amount of imagery used in forensic cases, the tools used for the analysis must be efficient. Mandet achieves this in a forensically sound way by automatically generating a working copy and calculating the hashes of the files, which are shown in the report.
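The principle of a hashed working copy can be sketched in a few lines of Python. This is a general illustration of the practice, not a description of how Mandet implements it. The choice of SHA-1 and SHA-256 and the function names are assumptions for this example.

```python
import hashlib
import shutil
from pathlib import Path


def file_hashes(path: Path, chunk_size: int = 1 << 20) -> dict[str, str]:
    """Hash a file in chunks so that large videos are never fully in memory."""
    sha1, sha256 = hashlib.sha1(), hashlib.sha256()
    with path.open("rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            sha1.update(chunk)
            sha256.update(chunk)
    return {"sha1": sha1.hexdigest(), "sha256": sha256.hexdigest()}


def make_working_copy(exhibit: Path, work_dir: Path) -> dict[str, dict[str, str]]:
    """Copy the exhibit into work_dir and verify the copy against the source."""
    work_dir.mkdir(parents=True, exist_ok=True)
    copy_path = work_dir / exhibit.name
    shutil.copy2(exhibit, copy_path)  # copy2 also preserves file timestamps
    original, working = file_hashes(exhibit), file_hashes(copy_path)
    if original != working:
        raise ValueError("working copy does not match the exhibit")
    return {"exhibit": original, "working_copy": working}
```

All analysis is then carried out on the working copy, and the recorded hashes allow anyone to confirm later that the exhibit itself has not changed.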

It is also possible to do a deep dive into the functionality. Many of the filters have parameters that can be adjusted, and the video trend analysers provide a graph of the derived signal together with its automatically determined thresholds.
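The idea of a signal with an automatically determined threshold can be illustrated with a short Python sketch. The method shown is a generic robust-statistics approach (median and median absolute deviation), chosen for this example; it is an assumption and should not be read as the algorithm Mandet uses. The per-frame signal, the factor `k` and the function name are likewise hypothetical.

```python
from statistics import median


def flag_outlier_frames(signal: list[float], k: float = 5.0) -> tuple[float, list[int]]:
    """Return (threshold, indices) for frames that deviate strongly.

    The threshold is k times the median absolute deviation (MAD), a spread
    estimate that a handful of outliers barely affects. If more than half of
    the values are identical the MAD is zero, so every differing frame is
    flagged; a practical tool would guard against that case.
    """
    centre = median(signal)
    mad = median(abs(value - centre) for value in signal)
    threshold = k * mad
    flagged = [i for i, value in enumerate(signal) if abs(value - centre) > threshold]
    return threshold, flagged


# Hypothetical per-frame signal, for example mean brightness per frame.
brightness = [10.0, 10.2, 9.9, 10.1, 10.0, 25.0, 10.1, 9.8]
threshold, frames = flag_outlier_frames(brightness)
print(frames)  # [5]
```

A flagged frame is only a prompt for closer inspection. A scene change or a lighting shift produces the same kind of deviation as an edit, which is why adjustable parameters and a plotted signal matter to the examiner.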

Conclusion

It is of the utmost importance that imagery presented within the justice system can be accepted as an accurate account of what it purports to represent, as it is evidence upon which triers of fact base their decisions and upon which defendants are convicted or released. No party within the system is better placed to opine on matters of authenticity than a digital imagery examiner, and so it is their responsibility to do what they can to prevent edited imagery from reaching the courtroom.

References

[1] Merriam-Webster, “justice.” [Online]. Available: https://www.merriam-webster.co....

[2] A. Memon, S. Mastroberardino, and J. Fraser, “Münsterberg’s legacy: What does eyewitness research tell us about the reliability of eyewitness testimony?,” Appl. Cogn. Psychol., vol. 22, no. 6, pp. 841–851, Sep. 2008, doi: 10.1002/acp.1487.

[3] SWGDE, “Digital and Multimedia Evidence Glossary Version 3.0.” Jun. 20, 2016.

[4] SWGDE, “Best Practices for Digital Forensic Video Analysis,” p. 18, 2018.

[5] A. J. Cooper, “Detection of copies of digital audio recordings for forensic purposes,” PhD Thesis, The Open University, 2006.